Introduction

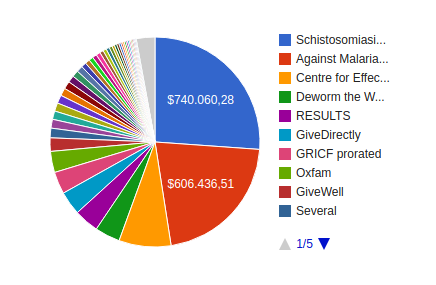

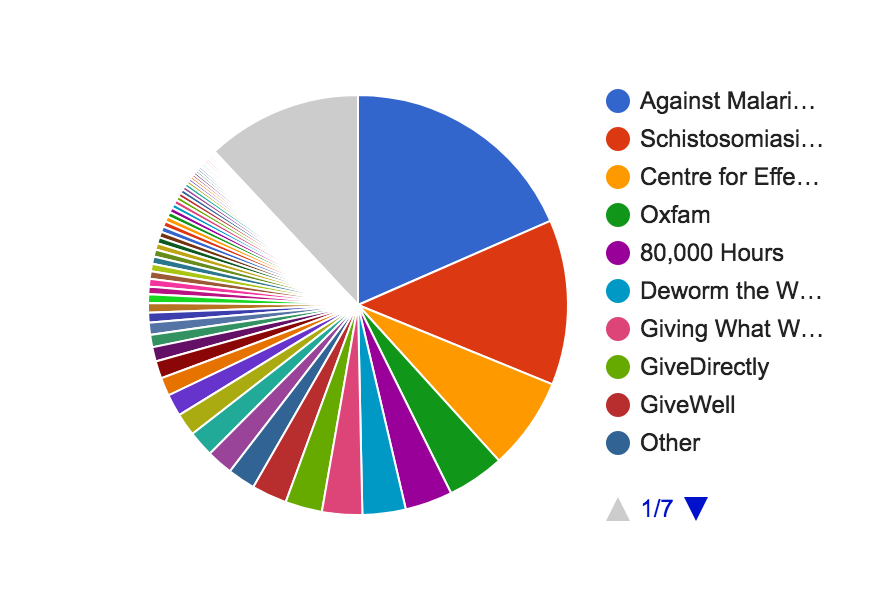

I think this change between September 2014 and today is a good thing. We seem to be investing more heavily in preparatory work as opposed to only placing the final bricks in almost-complete impact edifices. Since these are, I think, totals over all time, the current allocation (say, this year’s) must be slanted even further toward organizations like CEA, 80,000 Hours, and hopefully Wild Animal Suffering Research than is apparent from the totals.

The reason I’m happy to see it is that it shows that we may be solving one or more of three challenges: personal risk aversion, coordination, and understanding who generates the bulk of the impact. I’ll cover the first two only briefly, because it seems to me that they’re known, and mostly focus on the last.

- Decreasing marginal utility of money for generating happiness is a good reason to be somewhat risk averse personally. But while we can pretty clearly notice this decrease in the range of $20,000 to $80,000 or so, it becomes noticeable only at much higher amounts for altruistic investments – perhaps three orders of magnitude higher. So when we transfer our intuitions about risk aversion from personal finance to donations, we’ll likely lose out on expected value at no gain.

- A different perspective on this is that when we strive for an agent-relative goal such as personal wealth maximization, we also personally bear all the risk. But when we strive for an agent-neutral goal such as maximizing happiness or minimizing suffering, the successes and failures are shared to the degree that the actors coordinate their attempts: If there are 1000 plausible ideas of which a random one will succeed, and if 100 EAs coordinate to all try out different ones, 10 trials per EA, then the success is not the success of the one who happens to get lucky but the success of all of them collectively. It was a perfectly riskless endeavor for them.

- GiveWell currently recommends seven charities, of which three are really well known. But Open Phil has made grants to almost 180 charities; and add to that a bunch of EA charities they haven’t made grants to. These are probably charities that can plausibly outdo GiveDirectly in direct impact. But when people talk about deciding whether to invest in low risk–low reward or high risk–high reward interventions, it sounds as if they’re deciding between some roughly equal options or even with a slant toward low risk and low reward. The number of charities – though not necessarily the total funding gap just yet – indicates more of a 1:20 split. In effect, the share of donations that goes to high risk–high reward interventions, probably less than 50%, is spread so thin that it seems to vanish next to the donations that focus on GiveWell top charities. This argument alone is not sufficient to show that this is bad, but it shows that if it is bad (and I think it is, for other reasons), then we are (or were) not so much falling for the streetlight effect as running into a coordination problem.

- Finally, I think that a lot of people – though unfortunately probably few of the people I can reach with this article – are likely to undervalue preparatory work compared to final-brick type of work. This is what I will explain further in the following.
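The coordination example from the list above (1000 plausible ideas, 100 EAs, 10 trials each) can be checked with a few lines of arithmetic. This is only a sketch: the uncoordinated case, in which every EA independently picks 10 ideas at random, is my own assumption added for contrast, and all names are made up for illustration.

```python
N_IDEAS = 1000       # plausible ideas, exactly one of which succeeds
N_EAS = 100          # EAs trying ideas
TRIALS_PER_EA = 10   # ideas each EA can try


def individual_success_probability():
    """Chance that one particular EA happens to try the winning idea."""
    return TRIALS_PER_EA / N_IDEAS


def coordinated_success_probability():
    """Chance that the group finds the winner when no idea is tried twice."""
    covered = min(N_IDEAS, N_EAS * TRIALS_PER_EA)
    return covered / N_IDEAS


def uncoordinated_success_probability():
    """Chance that the group finds the winner without coordination.

    Assumption (not from the text): each EA independently picks
    TRIALS_PER_EA distinct ideas uniformly at random.
    """
    miss_by_one_ea = 1 - TRIALS_PER_EA / N_IDEAS
    return 1 - miss_by_one_ea ** N_EAS


print(individual_success_probability())     # chance for any single EA
print(coordinated_success_probability())    # chance for the coordinated group
print(uncoordinated_success_probability())  # chance without coordination
```

Under these assumptions each individual succeeds with a probability of only 1%, the coordinated group succeeds with certainty, and the uncoordinated group succeeds roughly 63% of the time because many trials are wasted on duplicates.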

The Iceberg

When a friend of mine founded a charity, she spent a year on research, got pro-bono counseling from specialist friends of hers, got seven people together to be legally able to found the organization, and got one of them to provide the office space for the meeting. Then a lawyer, who charged a particularly friendly rate, put the finishing touches on the by-laws, so we could all sign them. (I was one of those seven people.)

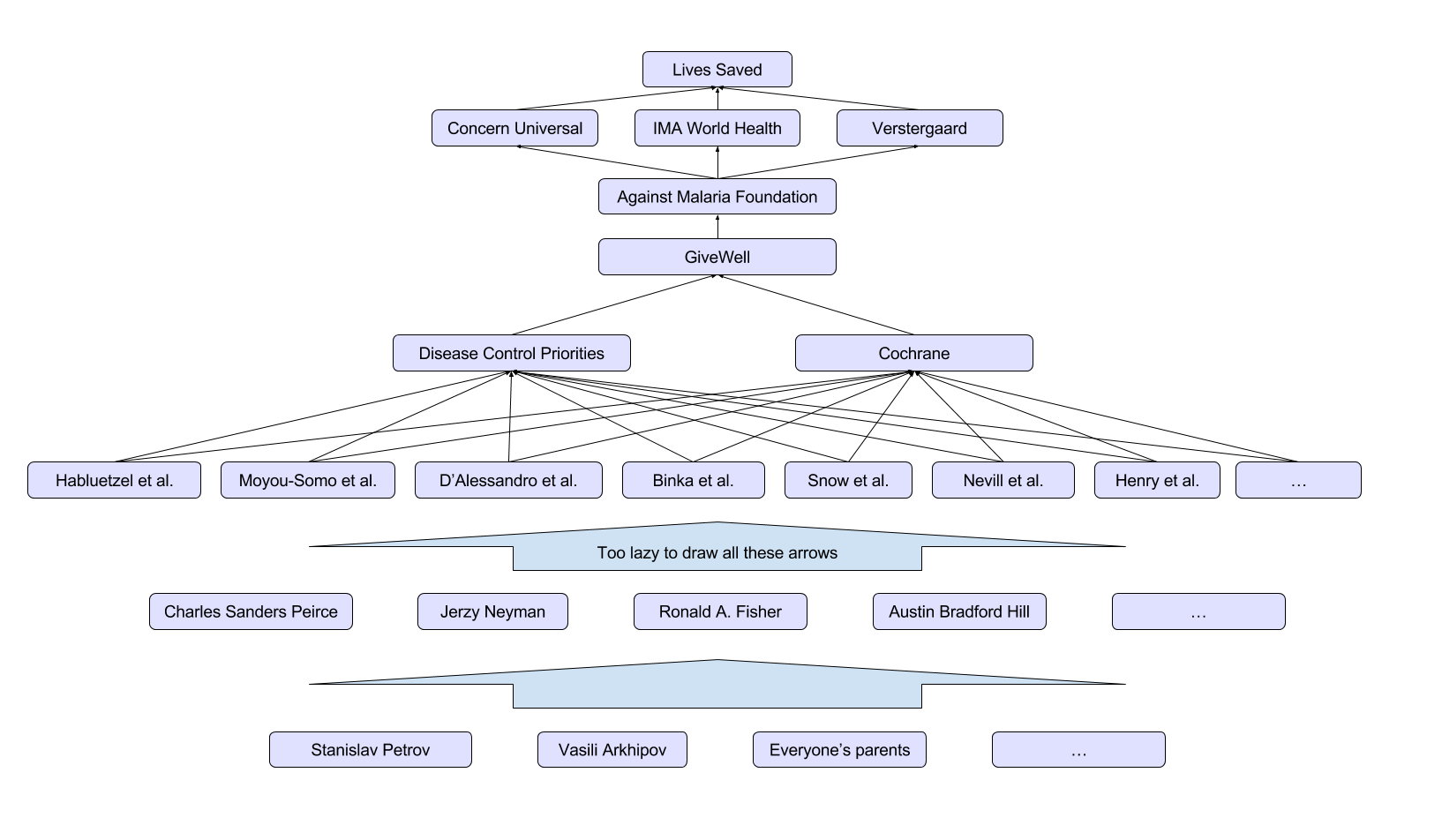

This might be an analogy for the work of the Against Malaria Foundation. Since AMF steps in and subsequently people don’t die, it might seem like AMF is to be credited with saving those people’s lives. Especially when it’s actually the case that they would’ve died without AMF and didn’t die with AMF, it would seem that way. But while AMF certainly made the difference between them dying or not dying, everyone else, without whom these people would’ve died too, also needs to be credited: distribution partners, net producers, donors, organizations like GiveWell who funnel donations to AMF, researchers who found bednets to be a good idea, everyone who prevented the third world war, everyone’s parents, et al.

In the above analogy, the distribution partners or the net producers may be like the lawyer; AMF, perhaps, is like the cofounder who provided the room and one of the signatures; my friend is like GiveWell and the Disease Control Priorities (DCP2) study together; and the friends she interviewed may’ve been like the 20-odd teams that conducted RCTs on malaria prevention with bednets.

The particular impact of founding her charity or conducting a net distribution is thus a joint achievement of all the above people and many more. So there are cases, perhaps most of them, where a lot of effort first had to be invested before one charity could plug some final hole or place some final brick and make all the impact come to fruition. The bulk of the iceberg probably lies below the GiveWell-recommended tip.

Research

Much of the bulk of the iceberg is research, which has the interesting property that often negative results – if they are the result of a high-quality, sufficiently powered study – can be useful. If the 100 EAs from the coordination example in the introduction are researchers, they know that one of the plausible ideas has to be right, and 99 of them have already been shown not to be useful, then the final EA researcher can already eliminate 99% of the work with very little effort by relying on what the others have already done. The bulk of that impact iceberg was thanks to the other researchers. Insofar as research is a component of the iceberg, it’s a particularly strong investment.

Additive Delays

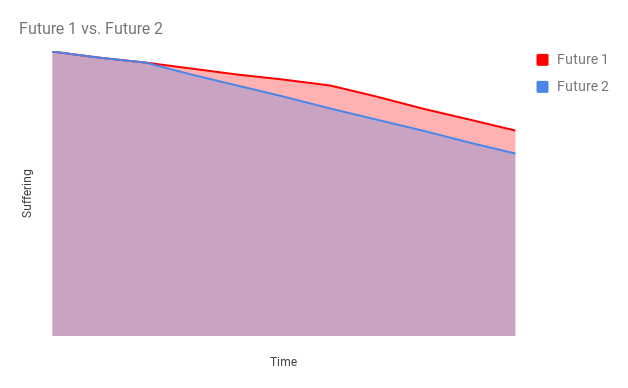

There’s another reason why the bulk of the iceberg is likely big. Let’s conceive of impact as a reduction of the integral over time of something we care to eliminate – say, suffering. An intervention like bednets will slightly reduce the slope of the curve (perhaps for a limited time), thereby reducing the aggregate suffering over all time. The earlier this reduction happens, the better.

Each step closer to the impact is dependent upon one or more previous steps, just like GiveWell’s contributions were dependent on studies, electronic money transfer, the invention of LLINs, Sci-Hub, etc. A delay of one of them – say, LLINs not having been invented yet – means that all dependent steps closer to the impact and all steps that, in turn, depend on them, are delayed as well. And among all the dependencies on one “level,” the one that has the longest delay is the one that determines the delay. The same happens at all further levels in the dependency tree, and all these delays add up.

In practice, I would not expect clean levels like that to emerge, but insofar as there are inventions or discoveries that are highly necessary for some impact to emerge, one strategy that would follow from this perspective would be to try to speed up the development of the necessary condition that one expects will take longest to come about.
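To make the additive-delay argument concrete, here is a minimal sketch that treats the steps as a dependency graph. The step names and durations are invented for illustration and do not describe the real history of bednets; the only logic it encodes is that a step can start once its slowest prerequisite is finished.

```python
# Hypothetical dependency graph: step -> (own duration in years, prerequisites).
# All names and numbers are made up for illustration.
STEPS = {
    "net_invention": (10, []),
    "money_transfer": (4, []),
    "rct_studies": (6, ["net_invention"]),
    "charity_evaluation": (3, ["rct_studies", "money_transfer"]),
    "net_distribution": (1, ["charity_evaluation"]),
}


def completion_time(step, steps):
    """Earliest time at which `step` can be finished.

    A step starts when its slowest prerequisite is done, so the delays
    along the longest chain of dependencies add up.
    """
    cache = {}

    def visit(name):
        if name not in cache:
            duration, prerequisites = steps[name]
            start = max((visit(p) for p in prerequisites), default=0)
            cache[name] = start + duration
        return cache[name]

    return visit(step)


def with_speedup(steps, name, saved):
    """Return a copy of `steps` in which `name` takes `saved` fewer years."""
    duration, prerequisites = steps[name]
    return {**steps, name: (duration - saved, prerequisites)}


baseline = completion_time("net_distribution", STEPS)
faster_bottleneck = completion_time(
    "net_distribution", with_speedup(STEPS, "net_invention", 3)
)
faster_non_bottleneck = completion_time(
    "net_distribution", with_speedup(STEPS, "money_transfer", 3)
)
print(baseline, faster_bottleneck, faster_non_bottleneck)  # 20 17 20
```

In this toy graph, saving three years on the slowest prerequisite brings the final impact forward by three years, while saving the same three years on a step that was never the bottleneck changes nothing.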

A counterargument might be that in some environments, something quick and simple can serve as a proof of concept to attract investment or revenue that can then enable the development of the more difficult innovation.

The Shapley Value

There’s really no one true way of assigning shares of this positive impact to the different contributors, but economists have come up with some sane-sounding axioms (informally summarized below) to describe one conception of justice and a method that uniquely assigns shares accordingly. That is the Shapley value. (Another method of allocation with different goals and results is the core.)

- Efficiency: it allocates the whole impact.

- Symmetry: equal contributors receive equal shares.

- Linearity: if you consider two cooperative efforts as one, each contributor’s share in the joint case will be the sum of the contributor’s shares in the split case.

- Zero/dummy/null player: someone who contributes nothing gets none of the impact assigned.

Note that this is wholly unnecessary for prioritization. We can choose our actions so as to maximize the total impact without applying any algorithm that splits it up again and assigns it to individual contributors. I have, in the past, argued against even attempting such an allocation because all algorithms I had been aware of had unacceptable implications. I probably still do, because the Shapley value is probably hard to calculate in most situations effective altruists will find themselves in. (Not so, however, in advertising.)

When we’re talking about replaceability in EA, we’re usually taking some action and some likely alternative involving inaction and comparing them, e.g., comparing the world with a world without AMF but otherwise identical. (80,000 Hours has touched on it here.) When we’re talking about comparative advantage, however, we’re considering a number of counterfactuals and a number of different actions and reactions to find the maximally impactful variant. I don’t know for sure, but it seems to me that making full use of one’s comparative advantage should be equivalent to maximizing one’s Shapley value.

A consideration of replaceability (defined in this limited way) alone, however, does not have the same properties. Say, we want to determine the contribution of C in a cooperation of A, B, and C – denoted as the set {A, B, C}. Replaceability would only consider the case where {A, B} cooperate and the case where {A, B, C} all cooperate and compare them. That may not be sufficient. Rather, to determine the Shapley value of C, we need to consider all eight subsets of {A, B, C}: for each of the four coalitions without C, we compare its impact with that of the same coalition with C added, and then take a weighted mean of these marginal contributions. The weights are the ones we get from averaging over all orders in which the contributors could have joined.
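For a group as small as {A, B, C}, the calculation can be sketched in a few lines. The impact numbers below are hypothetical and chosen only to show how the replaceability comparison and the Shapley value can come apart; the function averages each contributor’s marginal contribution over all orders of joining, which is equivalent to the weighted mean over subsets.

```python
from itertools import permutations
from math import factorial

# Hypothetical impact of each coalition (invented numbers).
# A and B achieve 40 together; C achieves nothing without both of them
# but lifts the total to 100 when all three cooperate.
IMPACT = {
    frozenset(): 0,
    frozenset("A"): 0,
    frozenset("B"): 0,
    frozenset("C"): 0,
    frozenset("AB"): 40,
    frozenset("AC"): 0,
    frozenset("BC"): 0,
    frozenset("ABC"): 100,
}


def shapley_values(players, impact):
    """Average each player's marginal contribution over all join orders."""
    totals = {player: 0.0 for player in players}
    for order in permutations(players):
        coalition = frozenset()
        for player in order:
            joined = coalition | {player}
            totals[player] += impact[joined] - impact[coalition]
            coalition = joined
    n_orders = factorial(len(players))
    return {player: total / n_orders for player, total in totals.items()}


def counterfactual_value(player, players, impact):
    """Replaceability-style comparison: everyone versus everyone but `player`."""
    everyone = frozenset(players)
    return impact[everyone] - impact[everyone - {player}]


players = ["A", "B", "C"]
shapley = shapley_values(players, IMPACT)
counterfactual = {p: counterfactual_value(p, players, IMPACT) for p in players}
print(shapley)         # {'A': 40.0, 'B': 40.0, 'C': 20.0}
print(counterfactual)  # {'A': 100, 'B': 100, 'C': 60}
```

With these made-up numbers the Shapley shares add up to the total impact of 100, as the efficiency axiom demands, whereas the three counterfactual comparisons add up to 260 and so cannot all be credited at once.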

Another difficulty with actually calculating a Shapley value is that in practice cooperation usually happens between groups of very large cardinality (just think of all the contributors in the graph above) where each contributor contributes at least some infinitesimal part. There is a reformulation of the Shapley value that captures this, but I don’t know how helpful it’ll be even just for illustration purposes.

The main take-away for me is to make sure that I also sufficiently value nonobvious preparatory work that has made some impact possible, and that I value preparatory work currently being done that may make the difference between impact materializing earlier, only much later, or never at all.
